LRU Cache in Javascript

if you're not familiar with a linked list and/or a hash map, do go and refresh your memory again

- linked list: my own notes

- hash map: my own notes, as well as a use case of a hash map.

Now let's look into how these two bits fit together.

What is an LRU Cache?

An LRU Cache is a cache (thing you use to temporary store memory, with a preset capacity) that removes items from the cache when it's full, by taking away the Least Used item. this LRU part is what is commonly called a Cache Eviction Policy.

LRU is just one of the many algorithms you can use to evict items from your cache. here are so, so many more.

uses of an LRU Cache

- iphone's top 4 apps (shows the most recently used apps. apps that are less recently used than the top 4 are 'evicted' from the list)

- any other thing that has a 'recently used' list in them (photoshop recent items, vscode recently opened etc)

- it seems most caches start with an LRU implementation, meaning and LRU cache fits the use cases of a majority of use cases for a cache (it's the best default)

why a linked list and a hash map?

a linked list provides insertion and deletion at the speed of O(1) (like, the fastest possible speed in programming terms, because whatever the size of the list, it takes 1 step to do the given action).

however, using linked lists mean that access and search take O(n) speed to perform. (meaning it could potentially need to loop through the entire list once to do something, hence n steps)

we can help speed this up by sticking the linked list into a hash map (a dictionary)! this means search is now also O(1).

the easiest way to sum this up would be:

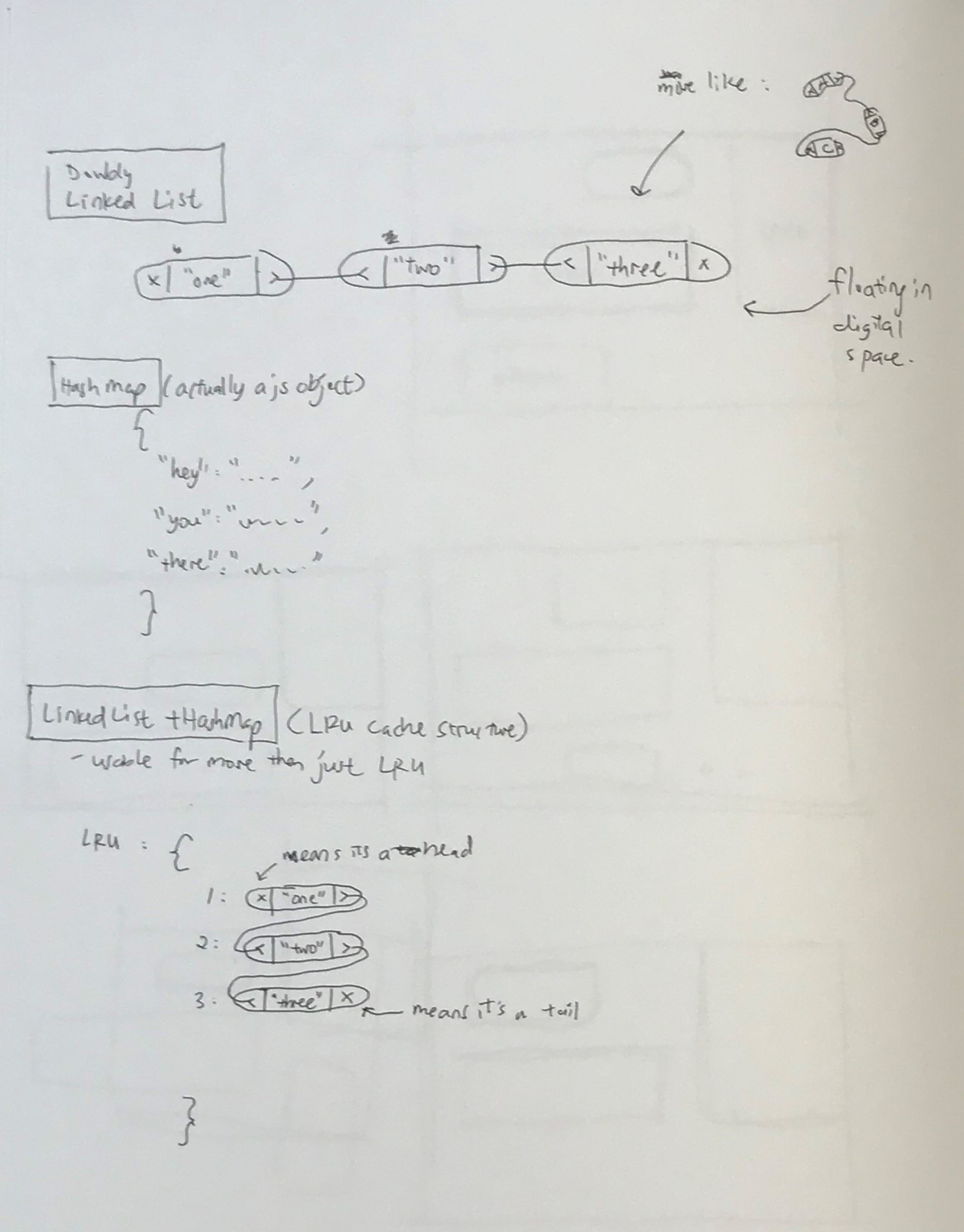

- a doubly linked list has two pointers (references)

- a doubly linked list stored in a hash map has 3 pointers (prev, next, hash index)

- with this index, now you can access any part of the linked list in O(1) time

ref: speedcharts (big O)

the code

here are some snippets that i found a bit confusing, so i even drew some diagrams to explain what's happening

overview of what happens

description WIP

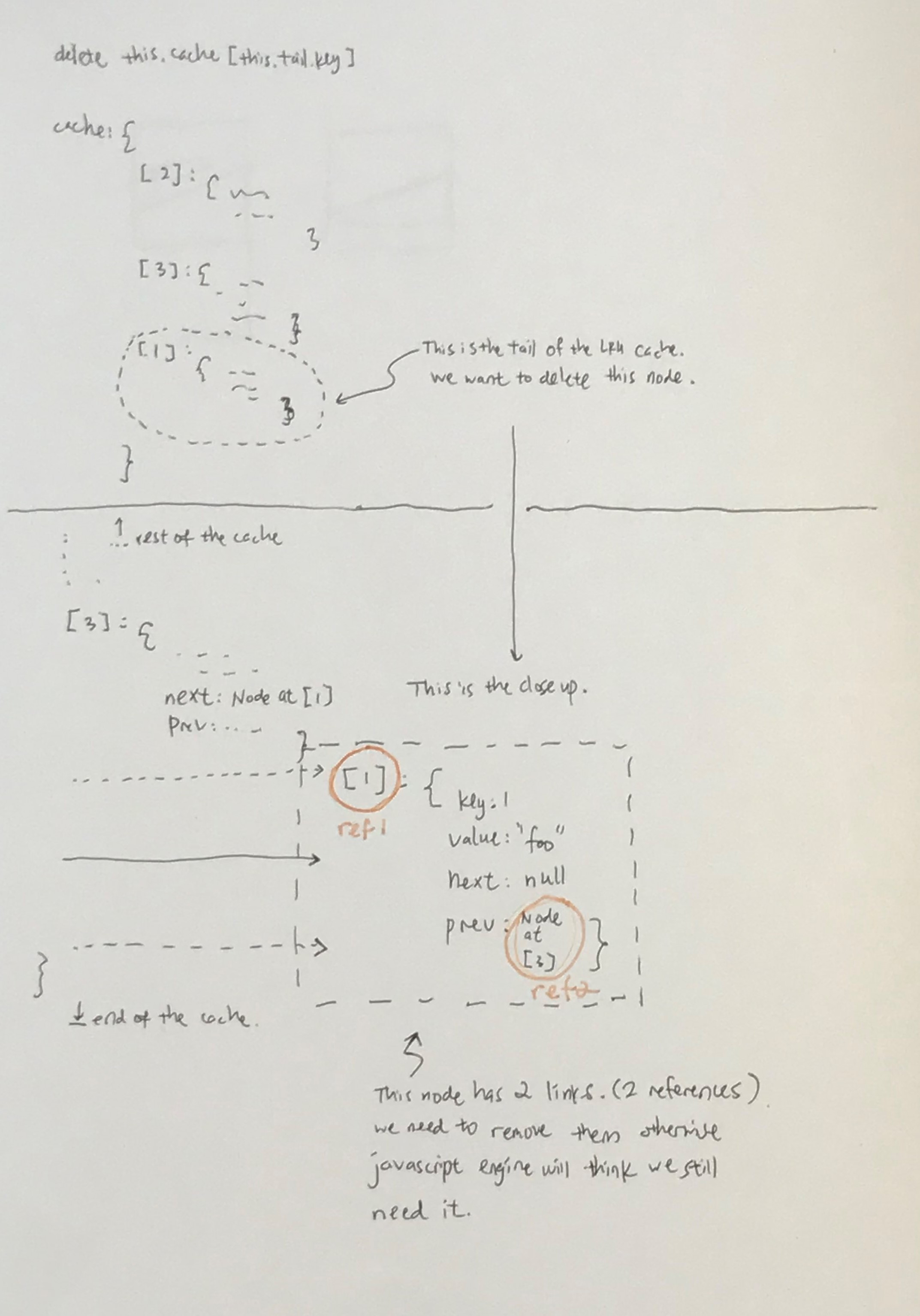

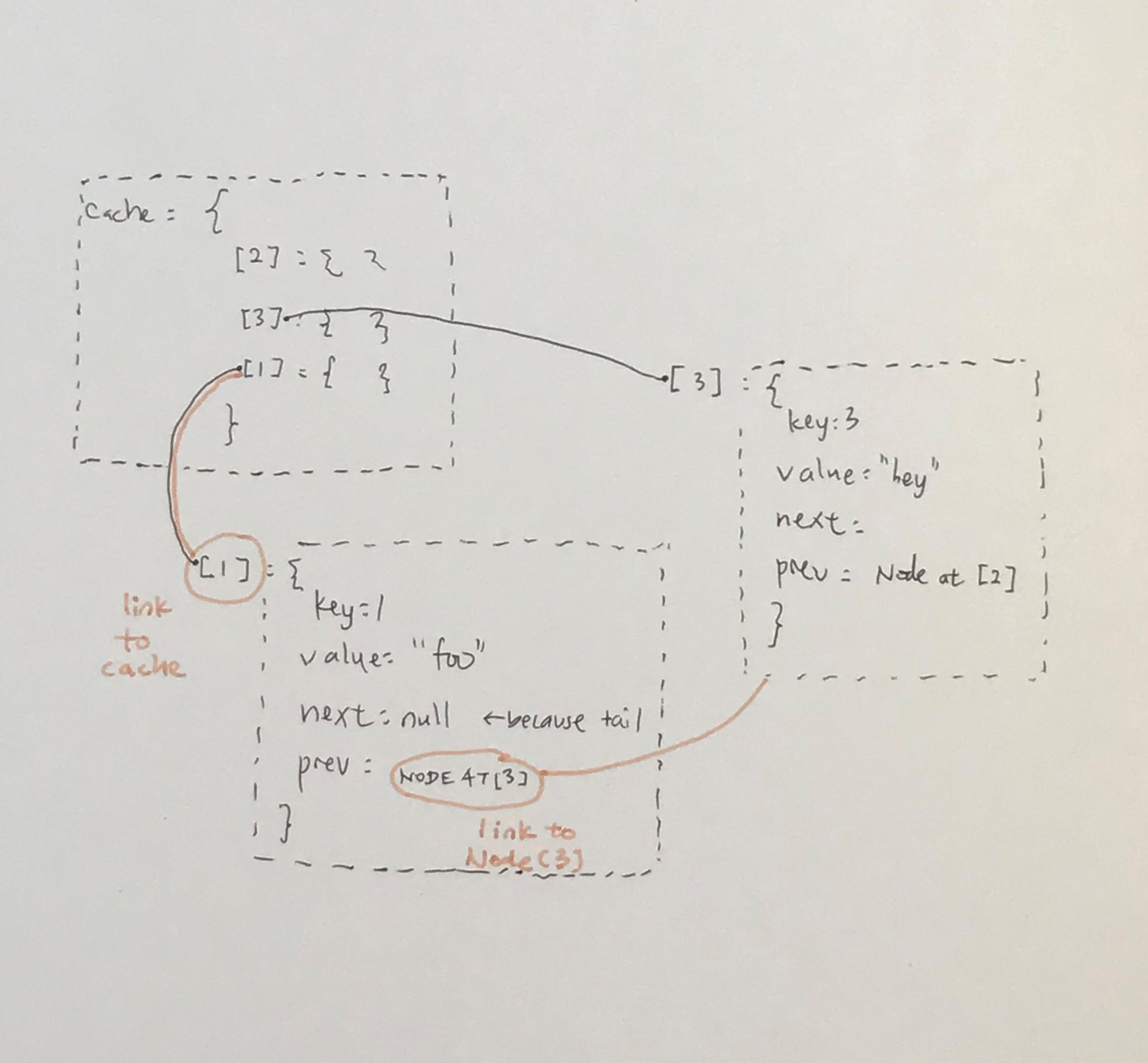

detail of what needs to happen to the node

description WIP

here's the code in full

// ----------------------------------

// least recently used (LRU) cache

// ----------------------------------

//

// first make a cache that has a limit

// (otherwise the eviction policy wouldn't trigger would it)

// then implement LRU cache eviction

// main: https://learnjswith.me/implement-an-lru-cache-in-javascript/

// sub: https://medium.com/dsinjs/implementing-lru-cache-in-javascript-94ba6755cda9

// 1. create data structure with initial limit

// 2.

// - add functionality to adding cache

// - get an element from cache

// - remove the last used element from the cache

// - iterating through cache

/*

requirements

the cache has to have the following functions:

- get()

- O(1)

- put()

- O(1)

- both get() and put() has to update the order of the memory to become 'most recent'

the cache has to have a max limit of n

- when the cache goes over the limit of n:

- evict the Least Recently Used data in the memory

*/

export enum CACHE_TYPE {

LRU = 'lru',

}

export const Cache = (type = CACHE_TYPE.LRU) => {

class Node {

key: number

value: any

next: Node | null

prev: Node | null

constructor(key, value, next = null, prev = null) {

this.key = key

this.value = value

this.next = next

this.prev = prev

}

}

/*

LRU = doubly linked list + hash map

*/

class LRU {

size: number

limit: number

head: Node | null

tail: Node | null

cache: { [key in Node['key']]: Node }

/*

----------------------------------------------

Least Recently Used (LRU) caching algorithm

----------------------------------------------

| O(1) to read/write

| takes up O(n) space

|

| can be imagined as a linked list + hash map

| js objects ~= a hash map and take O(1) time to lookup

| linked list allows O(1) read/writes

|

| in this implementation, 'reordering' the node means

| removing the old node from the list and adding a carbon

| copy of the node to the front of the list.

|

| linked list used where both ends mean something

| head = most recently used

| tail = least recently used

|

| RULES

| -----

| 1. do every insertion at the head (all writes only write to head)

| 2. on every read/update operation, detach node from its position and attach to the head O(1)

| 3. when cache exceeds limit, remove node from tail

|

| NOTES

| -----

| ** The JavaScript delete operator removes a property from an object; if no more references to the same property are held, it is eventually released automatically.

| the above actually means 'delete' only removes a property, it doesn't DELETE the property (in this instance, the node). if it's still got references somewhere else,

| e.g. in other nodes' .next or .prev properties. if everything is removed, it will be 'truly' removed eventually by the javascript engine (meaning removed from RAM).

*/

constructor(limit = 5) {

// INITIAL SETTING

this.limit = limit

this.size = 0

this.head = null

this.tail = null

this.cache = {}

}

reset(limit = 5) {

// RESET TO INITIAL SETTING

this.limit = limit

this.size = 0

this.head = null

this.tail = null

this.cache = {}

}

read(key: number) {

if (this.cache[key]) {

// 1. get value from cache

const value = this.cache[key].value

// 2. reorder this node to the top of the list

const node = new Node(key, value) // assign to new node

this.remove(key) // remove from prev node

this.setHead(node) // set head of node to new node

// 3. return the value

return value

}

}

write(key: number, value: any) {

// write == either create or update

// also if cache is full, remove one from tail

// 1. is this new or existing key

if (this.cache[key]) {

// 1a. if existing key

this.remove(key) // remove so we can setHead at 3

} else {

// 1b. if cache is full

if (this.size >= this.limit) {

// remove all traces of the current tail, and set it to the node before the tail (second last node)

delete this.cache[this.tail.key] // unlink the node from the cache (removed old tail's reference**) <-- refer to notes on top

this.size-- // update size of cache manually

this.tail = this.tail.prev // set the tail of the list to the second last item in the list (removes old tail's reference**)

this.tail.next = null // set the new tail's next to null (removes old tail's reference**)

}

}

// 2. set head to passed key value

const node = new Node(key, value)

this.setHead(node)

}

private setHead(node: Node) {

// 0. given the node that's passed in, ...

// 1. setup node's links to the rest of the linked list

node.next = this.head // because new node is now head, the next node to this is the OLD head (which is this.head)

node.prev = null // because this is the HEAD

// 2. point the cache's head and tail to the right places

// 2a. if head exists (which is ALMOST ALL THE TIME except the FIRST TIME on a new cache)

if (this.head !== null) {

// if it exists, we need to put the new node BEFORE the head (to make this node the new head)

this.head.prev = node

}

this.head = node // set incoming node as head

// 2b. if tail does not exist (which is only the FIRST TIME)

if (this.tail === null) {

this.tail = node // set node to end here, essentially making this a list of length 1

}

// 3. link the node to the cache

this.size++

this.cache[node.key] = node

}

remove(key: number) {

if (!this.cache[key]) {

console.log('key not found in cache!')

return

}

// 1. get the node that the user wants to remove

const node = this.cache[key] // get the node

const nodeIsHead = node.prev === null

const nodeIsTail = node.next === null

// 2. update head and tail

// compared to example, this is flipped for better readability

// 2a. deal with .next references

if (nodeIsHead) {

/*

|----------------------

| reassigning a head

| ---------------------

| happens if node is the HEAD

|

| this takes current node's next node

| (because the current node is going to be deleted)

| and makes it the new head

|

| x | 'node' (current, head) | >----< | 'bar' | >--- .....

| x | 'bar' (new head) | >--- .....

*/

this.head = node.next

} else {

/*

|-----------------------

| rewiring next nodes

| ----------------------

| happens on if this node is not the HEAD

|

| this takes the node before in the list,

| grab the .next reference,

| and sticks it to the thing after the current node in the list

|

| ..... < | 'baz' | >----< | 'node' (current, not head) | >----< | 'bar' | >--- .....

| ..... < | 'baz' | >-(this part)------------------------------< | 'bar' | >--- .....

|

| refer to 'rewiring prev nodes' to understand what (this part) actually means

*/

node.prev.next = node.next

}

// 2b. deal with .prev references

if (nodeIsTail) {

/*

|----------------------

| reassigning a tail

| ---------------------

| happens if node is the HEAD

|

| this takes current node's next node

| (because the current node is going to be deleted)

| and makes it the new head

|

| ..... < | 'foo' | >----< | 'node' (current, tail) | x

| ..... < | 'foo' | x

*/

this.tail = node.prev

} else {

/*

| ----------------------

| rewiring prev nodes

| ----------------------

| happens if node is not a TAIL

|

| ..... < | 'baz' | >----< | 'node' (current, not tail) | >----< | 'bar' | >--- .....

| ..... < | 'baz' | >------------------------------(this part)-< | 'bar' | >--- .....

*/

node.next.prev = node.prev

}

// 3. delete the node (finally)

delete this.cache[key]

this.size--

}

toJSON() {

// get ALL THE THINGS

let json = []

let node = this.head

while (node) {

let data = {

key: node.key,

value: node.value,

}

json.push(data)

node = node.next

}

return json

}

}

const cacheOptions = {

[CACHE_TYPE.LRU]: LRU,

// more cache types

}

return cacheOptions[type]

}

// SOME TESTS

const LRUCache = Cache(CACHE_TYPE.LRU)

const lru = new LRUCache(3) // cache of size 3

lru.write(1, 'first')

lru.write(2, 'second')

lru.write(3, 'third')

lru.write(4, 'fourth') // this should evict (1, 'first')

console.log(lru.read(1)) // check, this should be gone (return undefined)

console.log(lru.toJSON()) // read the entire cache (without affecting order) // this is debug mode

console.log(lru.read(2)) // check, this should return 'second', and

console.log(lru.toJSON()) // the order (by most recent) should now be 2,4,3