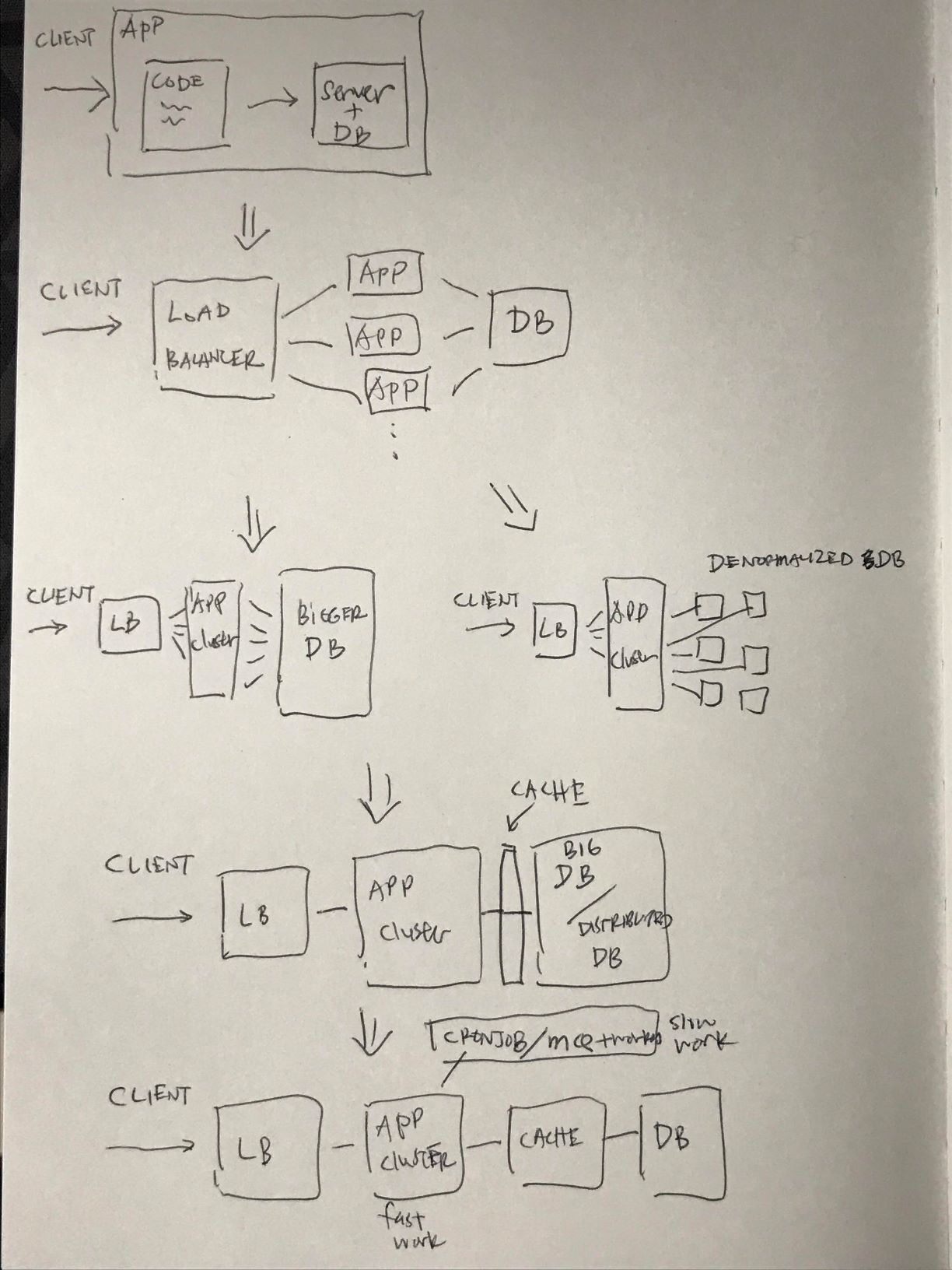

A series of events that lead to scaling up an app

from here: https://www.lecloud.net/tagged/scalability

- you have an app that does something. you can store your app 'state' in a json file on a server. or a csv. whatever.

- you have a lot of users, you can't serve them with one server

- you place a load balancer in front to distribute load

- you have multiple copies of your app across servers

- now sessions (your app state) need to be stored in a centralized data store. that's a database.

- your app now works with a load balancer and many replicas of apps, and they talk to a database.
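a rough sketch of the setup so far, assuming a round-robin balancer and a plain dict standing in for the centralized store. `SESSION_STORE`, `AppServer` and `RoundRobinBalancer` are made-up names for illustration, not from the article:

```python
from itertools import cycle

# Hypothetical stand-in for the centralized data store
# (a real setup would use a database or an external cache here).
SESSION_STORE = {}


class AppServer:
    """One replica of the app. It keeps no session state of its own."""

    def __init__(self, name):
        self.name = name

    def handle(self, session_id, item):
        # Session state is read from and written to the shared store,
        # so any replica can serve any user.
        cart = SESSION_STORE.setdefault(session_id, [])
        cart.append(item)
        return f"{self.name} sees cart {cart}"


class RoundRobinBalancer:
    """Hands each incoming request to the next server in turn."""

    def __init__(self, servers):
        self._servers = cycle(servers)

    def route(self, session_id, item):
        return next(self._servers).handle(session_id, item)


balancer = RoundRobinBalancer([AppServer("app-1"), AppServer("app-2")])
first = balancer.route("alice", "book")   # served by app-1
second = balancer.route("alice", "pen")   # served by app-2, same cart
```

the second request lands on a different replica but still sees the first item, because the cart lives in the shared store and not on either server.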

- somewhere down the road your app gets slower and slower. the reason: your database.

- there are two paths to take:

- stick to MySQL and keep it running, using a master-slave replication (or other methods), where you read from slaves, and write to master. then you upgrade your master with a lot of RAM. eventually, you need to 'shard' and 'denormalize' and do 'SQL tuning'

- denormalize immediately, and do no joins in your database queries (or minimal). then either stick with MySQL and use it like NoSQL, or use MongoDB or CouchDB. joins will now need to be done in application code
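what "joins in application code" might look like, as a minimal sketch. the `users` and `posts` lists are invented sample data standing in for the results of two simple queries without a JOIN:

```python
# Hypothetical results of two separate, join-free queries.
users = [{"id": 1, "name": "ann"}, {"id": 2, "name": "bob"}]
posts = [
    {"id": 10, "user_id": 1, "title": "hello"},
    {"id": 11, "user_id": 2, "title": "scaling"},
    {"id": 12, "user_id": 1, "title": "caching"},
]


def join_posts_with_authors(posts, users):
    """Do the equivalent of an inner join on posts.user_id = users.id."""
    # Index users by id once, so each post lookup is O(1).
    users_by_id = {u["id"]: u for u in users}
    return [
        {"title": p["title"], "author": users_by_id[p["user_id"]]["name"]}
        for p in posts
        if p["user_id"] in users_by_id  # drop posts with no matching user
    ]


joined = join_posts_with_authors(posts, users)
```

the database only answers simple lookups, and the work of combining rows moves into the app, which is easier to scale out by adding servers.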

- after you do these, it works for a while, then eventually your database requests will again get slower and slower. now you need to introduce a cache.

- cache means in-memory cache like Memcached or Redis. never do file-based caching, it makes cloning and auto-scaling your servers a pain.

- a cache sits between your application and data storage, and is a simple key-value store. when your application has to read data it should first try to retrieve it from the cache. only if it's not in the cache should it go to the main data store.
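a minimal sketch of that read path as it is usually done (cache first, main data store only on a miss). both dicts are stand-ins: `cache` for something like Memcached or Redis, `database` for the main data store. the `db_reads` counter is only there to show how often the database gets hit:

```python
cache = {}                                 # stand-in for Memcached / Redis
database = {"user:1": {"name": "ann"}}     # stand-in for the main data store
db_reads = 0                               # counts trips to the database


def get(key):
    """Return the value for key, hitting the database only on a cache miss."""
    global db_reads
    if key in cache:
        return cache[key]                  # cache hit: no database access
    db_reads += 1
    value = database.get(key)              # cache miss: go to the data store
    if value is not None:
        cache[key] = value                 # remember it for the next read
    return value


first = get("user:1")    # miss, reads the database
second = get("user:1")   # hit, served from the cache
```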

- there are two patterns to caching your data:

- Cached Database Queries, or "Cache the Request" (old way) - whenever you do a query to your db, you store the result dataset in a cache. A hashed version of your query is the cache key. This pattern has several issues, the main one being expiration. When one piece of data changes (e.g. a table cell), you need to delete all cached queries that may include that table cell. note: this can be mitigated by quick expiry, depending on use case

- Cached Objects, or "Cache the Result" (new way, strongly recommended by the guy in the linked article) - see your data as an object, and let the query make all the necessary multiple-requests to assemble the data array, and then cache that data array. note: find out more
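the two patterns side by side, as a sketch under some assumptions: `run_query` is a fake database call returning canned rows, and the key prefixes `query:` and `user_profile:` are naming choices made up here, not taken from the article.

```python
import hashlib

cache = {}  # stand-in for Memcached / Redis


def run_query(sql):
    """Hypothetical database call that returns canned rows."""
    fake_rows = {
        "SELECT name FROM users WHERE id = 1": [("ann",)],
        "SELECT title FROM posts WHERE user_id = 1": [("hello",), ("caching",)],
    }
    return fake_rows[sql]


def cached_query(sql):
    """Pattern 1: cache the raw result set under a hash of the query text."""
    key = "query:" + hashlib.sha256(sql.encode("utf-8")).hexdigest()
    if key not in cache:
        cache[key] = run_query(sql)
    return cache[key]


def cached_user_profile(user_id):
    """Pattern 2: assemble one object from several queries, cache the object."""
    key = f"user_profile:{user_id}"
    if key not in cache:
        # String-built SQL is for illustration only; real code should use
        # parameterized queries.
        name = run_query(f"SELECT name FROM users WHERE id = {user_id}")[0][0]
        rows = run_query(f"SELECT title FROM posts WHERE user_id = {user_id}")
        cache[key] = {
            "id": user_id,
            "name": name,
            "post_titles": [row[0] for row in rows],
        }
    return cache[key]


def invalidate_user_profile(user_id):
    """When the user changes, one predictable key needs to be dropped."""
    cache.pop(f"user_profile:{user_id}", None)


rows = cached_query("SELECT name FROM users WHERE id = 1")
profile = cached_user_profile(1)
```

with pattern 1 there is no easy way to tell which hashed keys contain a changed cell. with pattern 2 the key is derived from the object, so invalidation is a single delete.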

- caching objects makes async processing possible. you can have workers to process and create the object needed, and store it in the cache, and always let your app get it from the cache, and never need to touch the database.

- with the second one, you need to pick what to cache. some examples:

- user sessions (never in the database)

- fully rendered blog articles

- activity streams (per user. they don't change based on direct user input)

- user<->friend relationships

- the next part in scaling: Asynchronism. example quoted from the article:

This 4th part of the series starts with a picture: please imagine that you want to buy bread at your favorite bakery. So you go into the bakery, ask for a loaf of bread, but there is no bread there! Instead, you are asked to come back in 2 hours when your ordered bread is ready. That’s annoying, isn’t it?

To avoid such a “please wait a while” - situation, asynchronism needs to be done. And what’s good for a bakery, is maybe also good for your web service or web app.

- there are two ways / paradigms asynchronism can be done:

- 'bake the breads at night and sell them in the morning' - do time-consuming work in advance (you have to pick and choose) and serve the finished work with a low request time. examples:

- data queries done realtime, but scheduled reports of the same queries done async and delivered every morning.

- cronjobs that pre-render website pages as static HTML, to be hosted on CDNs.

- sometimes there are special requests, like a birthday cake with "Happy birthday, Steve" on it - these can't be prepared in advance, so you need a web service that handles tasks asynchronously. examples:

- message queues

- long polling
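a small sketch of the message queue idea using only the standard library. a real deployment would use a dedicated message broker. the names `tasks`, `results`, `worker` and `submit` are hypothetical:

```python
import queue
import threading

tasks = queue.Queue()   # stand-in for a real message queue
results = {}            # where finished work is stored for later pickup


def worker():
    """Pull jobs off the queue and process them in the background."""
    while True:
        job = tasks.get()
        if job is None:            # sentinel: no more work, shut down
            tasks.task_done()
            break
        job_id, text = job
        # The slow, custom work happens here, away from the request path.
        results[job_id] = f"cake with '{text}' on it"
        tasks.task_done()


thread = threading.Thread(target=worker, daemon=True)
thread.start()


def submit(job_id, text):
    """Called by the web app: enqueue the job and return immediately."""
    tasks.put((job_id, text))
    return job_id


submit("order-1", "Happy birthday, Steve")
tasks.put(None)   # tell the worker to stop once the queue is drained
tasks.join()      # wait until every queued item has been processed
thread.join()
```

the app returns right after `submit`. the customer (or the frontend, e.g. via long polling) checks back later and finds the finished job in `results`.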
